BOTSv3 Blue Team CTF

Dec 2025

Blue team CTF scenarios testing incident response and threat hunting

About This Benchmark

Sample Questions

Q: What external client IP address is able to initiate successful logins to Frothly using an expired user account?

A: 199.66.91.253

Q: Bud accidentally makes an S3 bucket publicly accessible. What is the event ID of the API call that enabled public access?

A: ab45689d-69cd-41e7-8705-5350402cf7ac

Methodology

Scoring

- Accuracy: Case-insensitive exact match against ground truth answers

- Cost: USD per task based on token usage (excluding prompt caching)

- Latency: Wall-clock time to complete each task

- Completion: Percentage of tasks finished without unrecoverable errors
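The accuracy and completion metrics above can be sketched as follows. This is a minimal illustration, not the benchmark's actual scorer: the `TaskResult` type and `summarize` helper are hypothetical names, stripping surrounding whitespace before comparison is an assumption, and so is counting failed runs as incorrect when computing accuracy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskResult:
    """Hypothetical record for one task run."""
    answer: Optional[str]  # None means the run ended in an unrecoverable error
    truth: str             # ground truth answer


def is_correct(answer: Optional[str], truth: str) -> bool:
    # Case-insensitive exact match; whitespace stripping is an assumption.
    if answer is None:
        return False
    return answer.strip().casefold() == truth.strip().casefold()


def summarize(results: list[TaskResult]) -> dict[str, float]:
    total = len(results)
    completed = sum(r.answer is not None for r in results)
    correct = sum(is_correct(r.answer, r.truth) for r in results)
    # Assumption: accuracy is measured over all tasks, not only completed ones.
    return {"accuracy": correct / total, "completion": completed / total}


results = [
    TaskResult("199.66.91.253", "199.66.91.253"),
    TaskResult("AB45689D-69CD-41E7-8705-5350402CF7AC",
               "ab45689d-69cd-41e7-8705-5350402cf7ac"),
    TaskResult(None, "example-truth"),          # unrecoverable error
    TaskResult("wrong-answer", "example-truth"),
]
summary = summarize(results)
print(summary)
```

Exact matching is strict by design: an answer with extra explanation around the correct value would be scored as wrong under this sketch.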

Setup

- Built a Splunk Enterprise instance and indexed the full BOTSv3 dataset

- Agents given access to three tools: search, listDatasets, and describeSourceType

- Tasks require agents to query Splunk, analyze results, and produce a final answer

Controls

- Same minimal system prompt for all models, no per-model tuning

- "Thinking" mode enabled where available

- Agent loops capped at 100 iterations (tools removed to force an answer)

- Some questions modified to include context that humans would have in a CTF setting

- Questions requiring web search or browser access were removed
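The iteration cap described above can be sketched as a simple loop. This is an illustration under stated assumptions, not the harness's implementation: the `model` callable, its `(history, tool_names)` signature, and the step dictionaries are all hypothetical, and the sketch assumes the forced, tool-free answer happens on one extra call after the cap is reached.

```python
from typing import Callable

MAX_ITERATIONS = 100  # cap stated in the controls above

# Hypothetical step format: {"tool": name, "args": {...}} or {"answer": text}.
Model = Callable[[list, list], dict]


def run_agent(model: Model, tools: dict, question: str,
              max_iterations: int = MAX_ITERATIONS):
    history = [{"role": "user", "content": question}]
    for _ in range(max_iterations):
        step = model(history, list(tools))
        if "answer" in step:
            return step["answer"]
        # Run the requested tool and feed the result back to the model.
        output = tools[step["tool"]](**step.get("args", {}))
        history.append({"role": "tool", "name": step["tool"], "content": output})
    # Cap reached: call once more with no tools so the model has to answer.
    final = model(history, [])
    return final.get("answer")


# Stand-in model that keeps searching until its tools are taken away.
seen_tool_counts = []

def stubborn_model(history, tool_names):
    seen_tool_counts.append(len(tool_names))
    if not tool_names:
        return {"answer": "forced answer"}
    return {"tool": "search", "args": {"query": "index=botsv3"}}


demo_tools = {"search": lambda query: "no results"}
forced = run_agent(stubborn_model, demo_tools, "example question", max_iterations=3)
print(forced)
```

Removing the tools rather than aborting the run means a model that never converges still produces a scoreable answer instead of an error.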

Key Findings

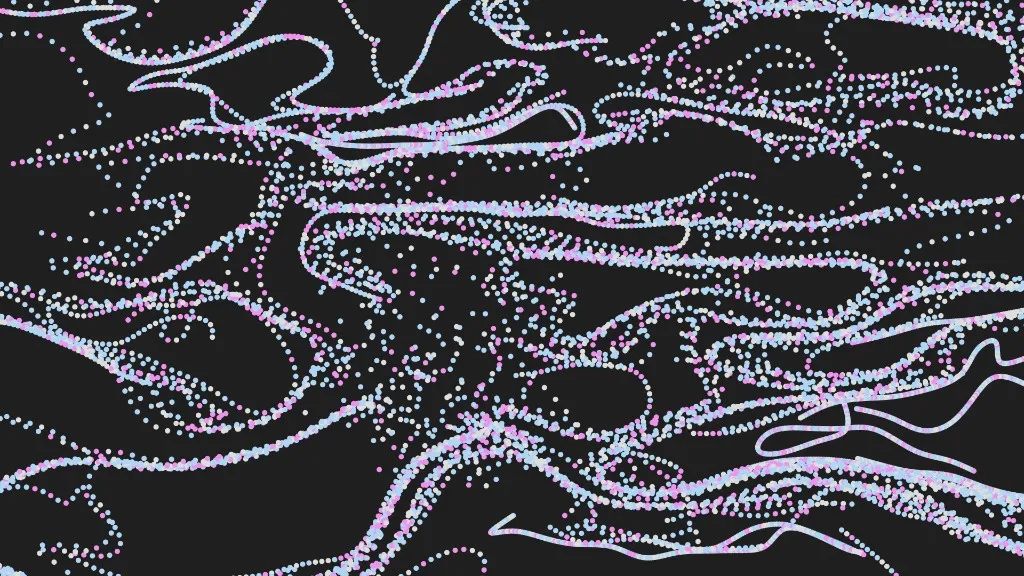

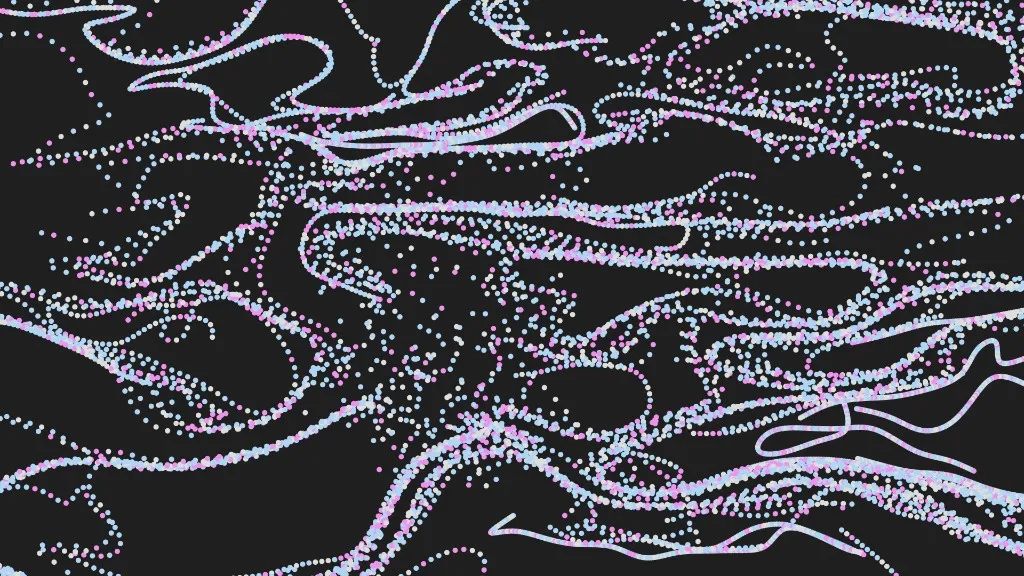

Accuracy

GPT-5.2 achieved the highest accuracy at ~69%, followed by GPT-5.1 and Opus 4.5 at 65%. GPT-5 and Sonnet 4.5 scored 63% and 61% respectively. Among open-weight models, Qwen3 Coder led at 43%, while MiniMax M2 and GPT-OSS-120b scored between 25% and 29%.

Accuracy by Model

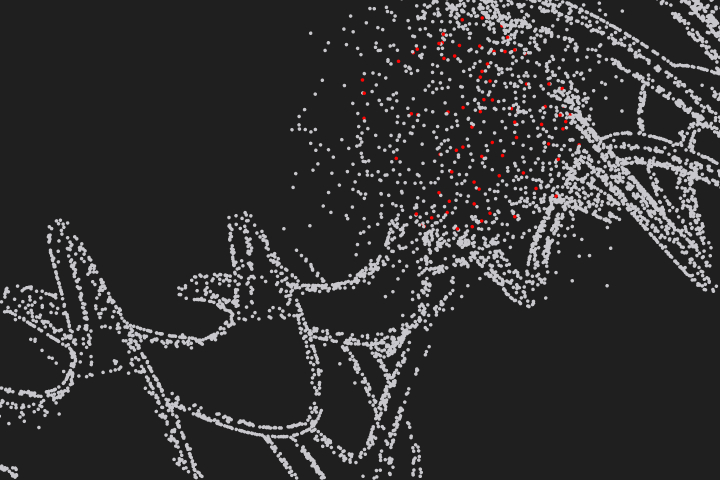

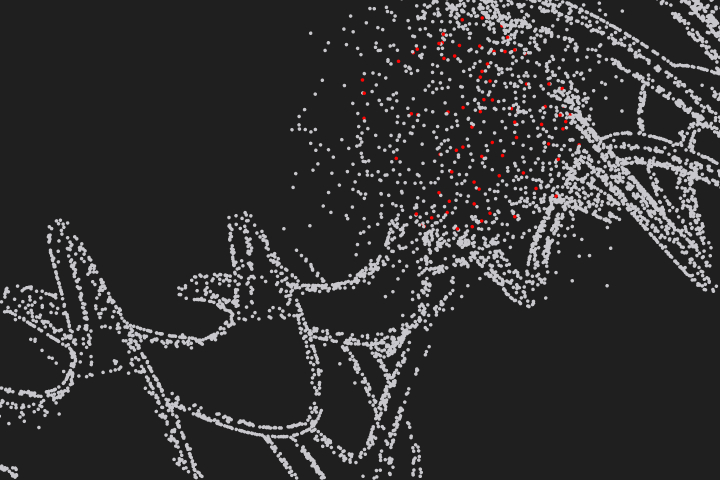

Speed

Opus 4.5 was the fastest competitive model, averaging just 113s per task, roughly half the time of Haiku 4.5 (240s), despite presumably being a larger model. This suggests reasoning efficiency can outweigh raw inference latency in long-horizon agentic tasks.

Task Duration (avg)

Cost

GPT-5.1 delivered 65% accuracy at just ~$1.67/task, the best cost-to-accuracy ratio among frontier models. GPT-5.2 cost ~$4.03/task for 69% accuracy, while Opus 4.5 cost ~$5.14/task for 65%. Among open-weight models, Qwen3 Coder offered strong value at ~$0.22/task (43% accuracy), with MiniMax M2 and GPT-OSS-120b even cheaper at ~$0.15 and ~$0.10/task respectively.
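One way to make the cost-to-accuracy comparison concrete is to divide cost per task by accuracy, giving an expected cost per correct answer. The sketch below uses only the approximate figures quoted above; the metric itself and the `cost_per_correct` helper are illustrative choices, not something the benchmark reports.

```python
# (USD per task, accuracy as a fraction), copied from the figures quoted above.
REPORTED = {
    "GPT-5.1": (1.67, 0.65),
    "GPT-5.2": (4.03, 0.69),
    "Opus 4.5": (5.14, 0.65),
    "Qwen3 Coder": (0.22, 0.43),
}


def cost_per_correct(cost_per_task: float, accuracy: float) -> float:
    """Expected USD spent per correctly answered task."""
    return cost_per_task / accuracy


# Cheapest cost per correct answer first.
ranking = sorted(REPORTED, key=lambda name: cost_per_correct(*REPORTED[name]))

for name in ranking:
    print(f"{name}: ${cost_per_correct(*REPORTED[name]):.2f} per correct answer")
```

Because the inputs are rounded, the outputs are only indicative, and the metric ignores that some questions may be out of reach for a cheaper model no matter how many attempts are made.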

Cost per Task

Token Efficiency

Among frontier models, GPT-5 was the most token-efficient at ~793K tokens per task, while Sonnet 4.5 consumed ~2.4M, roughly 3x as many. GPT-5.2 (~2.1M) and GPT-5.1 (~1.2M) were notably less efficient than their predecessor, GPT-5. Among smaller models, GPT-5 Mini led at just ~87K tokens per task, followed by DeepSeek v3.2 (~126K) and GPT-OSS-120b (~198K).
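The frontier-model comparison can be checked with a quick ratio against GPT-5 as the baseline. The token counts below are the approximate figures quoted above, so the resulting ratios are approximate as well.

```python
# Approximate tokens per task, copied from the figures quoted above.
TOKENS_PER_TASK = {
    "GPT-5": 793_000,
    "GPT-5.1": 1_200_000,
    "GPT-5.2": 2_100_000,
    "Sonnet 4.5": 2_400_000,
}

baseline = TOKENS_PER_TASK["GPT-5"]

# Token usage of each model as a multiple of GPT-5's usage.
ratios = {name: tokens / baseline for name, tokens in TOKENS_PER_TASK.items()}

for name, ratio in sorted(ratios.items(), key=lambda item: item[1]):
    print(f"{name}: {ratio:.2f}x GPT-5")
```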

Token Usage per Task

Reliability

Most models achieved 100% task completion, including GPT-5.2, GPT-5.1, GPT-5, Sonnet 4.5, Haiku 4.5, GPT-5 Mini, Qwen3 Coder, MiniMax M2, GPT-5 Nano, and DeepSeek v3.2. However, some models suffered from many unrecoverable errors, particularly GPT-OSS-120b (69% completion) and the Gemini models (Gemini 3.0 Pro at 92%, Gemini 2.5 Pro at 84%, and Gemini 2.5 Flash at 88%). This suggests potential struggles with long-context log investigation tasks.

Task Completion Rate

Model Recommendations

- GPT-5.2 — Best for most blue team investigations. Highest accuracy at 69% with 100% task completion reliability.

- GPT-5.1 — Best value for top-tier accuracy. Achieves 65% accuracy at ~1/3 the cost of Opus 4.5 with 100% reliability.

- Claude Opus 4.5 — Best for time-critical investigations where speed is paramount. Fastest model tested with 65% accuracy, though at higher cost.

- Qwen3 Coder — Best for cost-sensitive investigations requiring moderate accuracy. Achieves 43% accuracy at ~$0.22/task with 100% reliability.

- Claude Haiku 4.5 — Best for interactive triage and real-time alert enrichment. Good balance of speed (240s), accuracy (51%), and 100% reliability at low cost.

Caveats

- Some questions were modified to include context that a human would have possessed in the original CTF setting.

- Questions requiring web search or browser access were removed to focus the evaluation on SIEM-based investigation.

- Cost estimates exclude prompt caching benefits, which can substantially reduce effective cost in production.

