Sigma Detection Classification

Jan 2026

Multi-label classification of MITRE ATT&CK tactics and techniques from Sigma rules

About This Benchmark

Sample Tasks

    title: File Decoded From Base64/Hex Via Certutil
    logsource:
        category: process_creation
        product: windows
    detection:
        selection:
            Image|endswith: '\\certutil.exe'
            CommandLine|contains:
                - '-decode'
                - '-decodehex'
        condition: selection
    ---
    title: Suspicious PowerShell Download Cradle
    logsource:
        category: ps_script
        product: windows
    detection:
        selection:
            ScriptBlockText|contains:
                - 'IEX'
                - 'Invoke-Expression'
                - 'DownloadString'
        condition: selection

Methodology

Scoring

- F1 Score: Hierarchical F1 score accounting for MITRE technique hierarchy (partial credit for parent techniques)

- Precision: Proportion of predicted techniques that are correct

- Recall: Proportion of ground-truth techniques that were correctly predicted

- Cost: USD per sample based on token usage

Task Design

- Models receive a Sigma rule (title, description, detection logic) with MITRE tags stripped to prevent label leakage

- Output is parsed as a comma-separated list of technique IDs (e.g., T1059, T1059.001)

- Ground truth comes from the official attack.* tags authored in Sigma rules

- Single-turn prediction tests intrinsic knowledge, no tool use or external lookups
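The preprocessing and parsing steps above can be sketched roughly as follows. This is a minimal illustration, not the benchmark's actual harness: the function names `split_rule` and `parse_prediction` are hypothetical, the rule is assumed to be already loaded into a Python dict, and ground-truth techniques are assumed to appear as `attack.tNNNN` or `attack.tNNNN.NNN` tags (tactic tags such as `attack.defense_evasion` are ignored here).

```python
import re

# Matches technique IDs such as T1059 or T1059.001 (case-insensitive).
TECHNIQUE_RE = re.compile(r"T\d{4}(?:\.\d{3})?", re.IGNORECASE)


def split_rule(rule: dict) -> tuple[dict, set[str]]:
    """Return (rule with tags removed, technique IDs found in attack.* tags).

    Hypothetical helper: assumes the Sigma rule was parsed into a dict.
    """
    truth: set[str] = set()
    for tag in rule.get("tags", []):
        if tag.lower().startswith("attack."):
            candidate = tag[len("attack."):]
            if TECHNIQUE_RE.fullmatch(candidate):
                truth.add(candidate.upper())
    # Drop the tags so the model never sees the labels.
    stripped = {key: value for key, value in rule.items() if key != "tags"}
    return stripped, truth


def parse_prediction(text: str) -> list[str]:
    """Parse comma-separated model output into unique, upper-cased IDs."""
    ids: list[str] = []
    for part in text.split(","):
        candidate = part.strip()
        if TECHNIQUE_RE.fullmatch(candidate) and candidate.upper() not in ids:
            ids.append(candidate.upper())
    return ids


rule = {
    "title": "File Decoded From Base64/Hex Via Certutil",
    "tags": ["attack.defense_evasion", "attack.t1140"],
}
model_input, ground_truth = split_rule(rule)
predictions = parse_prediction("T1140, t1059.001, not-an-id")
```

Anything in the model output that is not a well-formed technique ID is silently dropped in this sketch; a real harness might instead count it as a formatting failure.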

Hierarchical Scoring

- MITRE techniques follow a parent/sub-technique hierarchy (e.g., T1059 → T1059.001)

- Exact match or more specific prediction (sub-technique when parent expected): 1.0 score

- Less specific prediction (parent when sub-technique expected): 0.75 partial credit

- Optimal greedy matching assigns predictions to ground truths maximizing total score

- Hierarchical precision = (sum of match scores) / (number of predictions)

- Hierarchical recall = (sum of match scores) / (number of ground truths)

- This approach rewards semantic understanding of attack relationships over exact memorization
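One way to read the scoring rules above is sketched below. It is an interpretation under stated assumptions rather than the benchmark's published code: the greedy step is assumed to take the highest-scoring prediction/ground-truth pairs first with each ID used at most once, sibling sub-techniques (for example T1059.001 vs T1059.003) are assumed to score 0, and F1 is assumed to be the harmonic mean of hierarchical precision and recall for a single rule. The function names are hypothetical.

```python
def match_score(pred: str, truth: str) -> float:
    """Score one prediction against one ground-truth technique ID."""
    # Exact match, or prediction is a sub-technique of the expected parent.
    if pred == truth or pred.startswith(truth + "."):
        return 1.0
    # Prediction is the parent of the expected sub-technique.
    if truth.startswith(pred + "."):
        return 0.75
    return 0.0  # Assumption: unrelated IDs and siblings earn nothing.


def hierarchical_prf(preds, truths):
    """Return (precision, recall, f1) for one rule using greedy matching."""
    preds, truths = sorted(set(preds)), sorted(set(truths))
    # All candidate pairs, best scores first (assumed greedy order).
    pairs = sorted(
        ((match_score(p, t), p, t) for p in preds for t in truths),
        key=lambda item: -item[0],
    )
    used_preds, used_truths, total = set(), set(), 0.0
    for score, p, t in pairs:
        if score == 0.0:
            break  # Remaining pairs cannot add credit.
        if p in used_preds or t in used_truths:
            continue  # Each ID may be matched at most once.
        used_preds.add(p)
        used_truths.add(t)
        total += score
    precision = total / len(preds) if preds else 0.0
    recall = total / len(truths) if truths else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# One sub-technique where a parent was expected (1.0) and one parent
# where a sub-technique was expected (0.75).
p, r, f1 = hierarchical_prf(["T1003.001", "T1059"], ["T1003", "T1059.001"])
```

In this example the match scores sum to 1.75 across two predictions and two ground truths, so precision, recall, and F1 all come out at 0.875.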

Controls

- Same prompt template for all models, no per-model tuning

- Models instructed to output ONLY comma-separated technique IDs (e.g., T1078, T1003.001)

Prompt Template

Key Findings

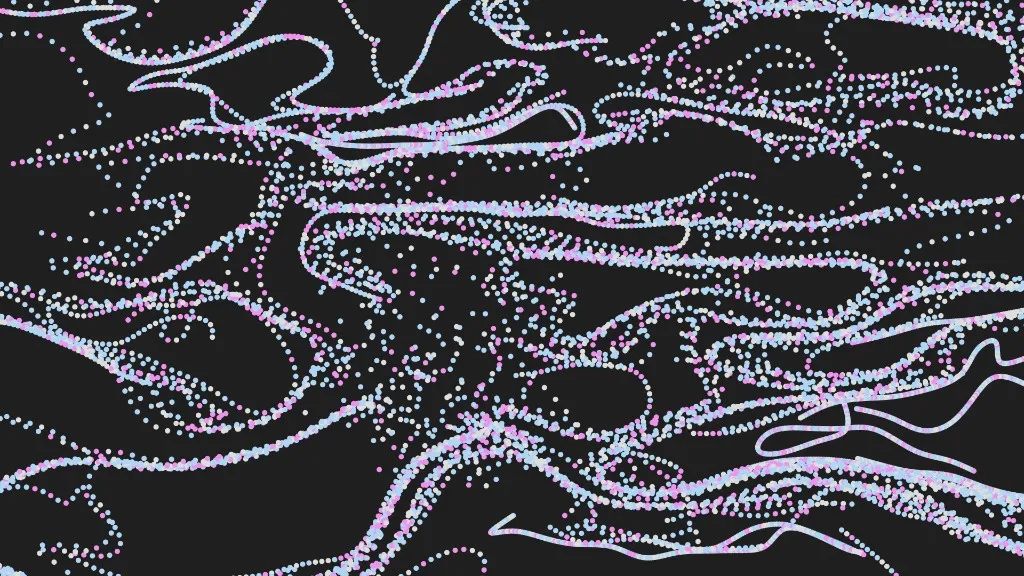

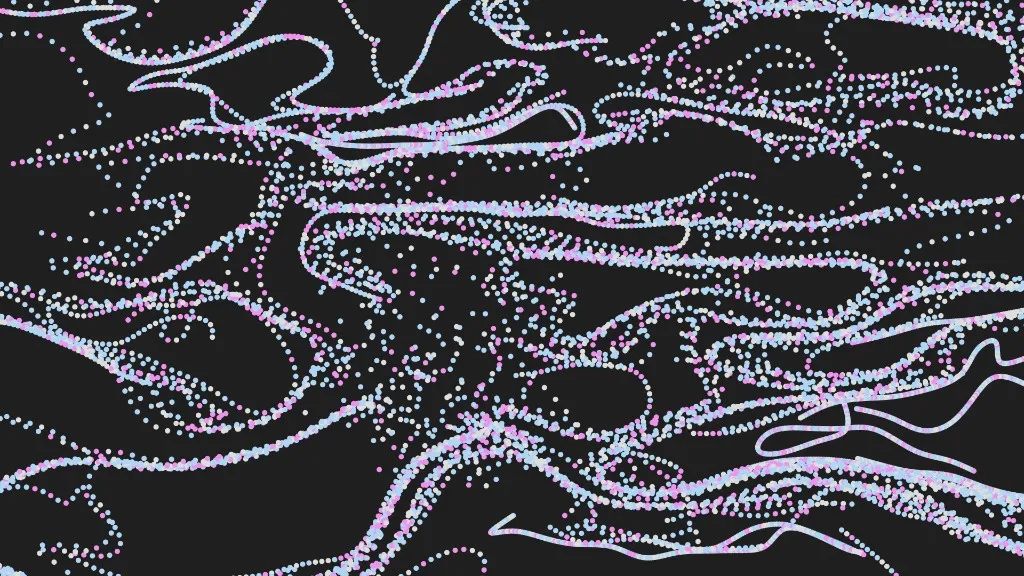

F1 Score

Claude Opus 4.5 achieved the highest hierarchical F1 score at 66%, followed by Sonnet 4.5 at 60% and a cluster of models around 56-57% (Gemini 3.0 Pro, Gemini 3.0 Flash, GPT-5.2). Among open-weight models, DeepSeek v3.2 led at 48% F1.

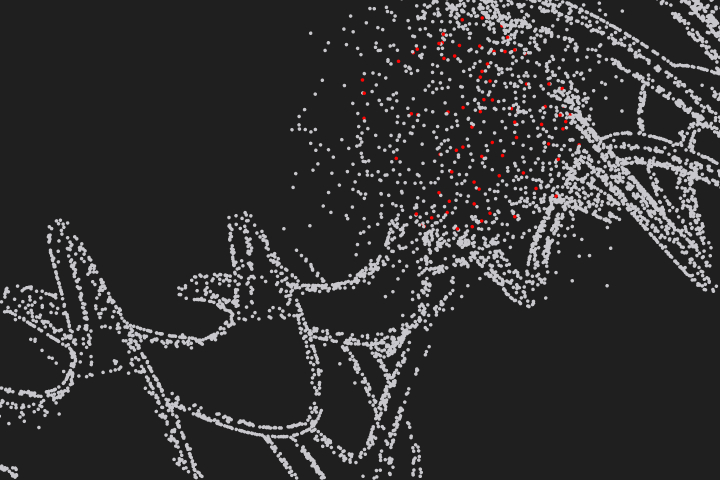

F1 Score by Model

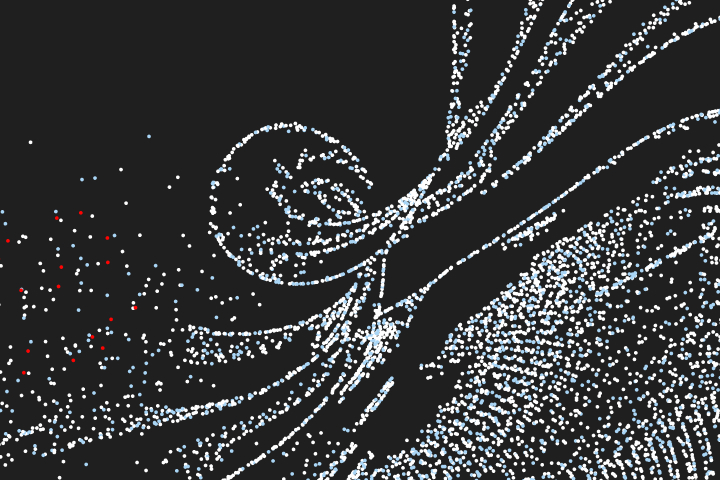

Precision vs Recall

Since ground truth labels are community-contributed and often incomplete, recall is the more reliable metric. It measures coverage of human-intended labels, while precision is penalized when models correctly identify techniques the author simply forgot to tag. Gemini 3.0 Flash achieved the highest recall at 71%, followed by Opus 4.5 (69%) and Sonnet 4.5 (69%). Among open-weight models, DeepSeek v3.2 led with 49% recall.

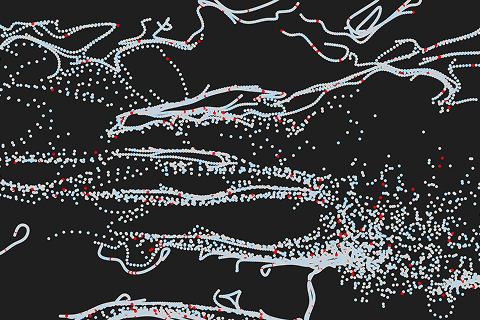

Precision vs Recall: top-right is best (high precision and recall)

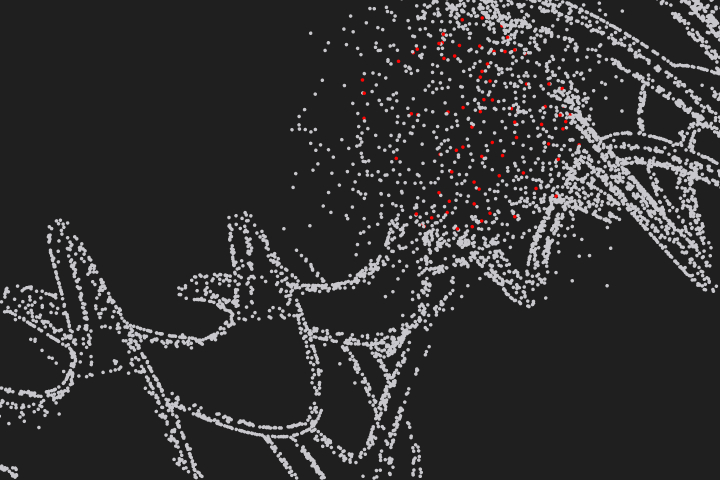

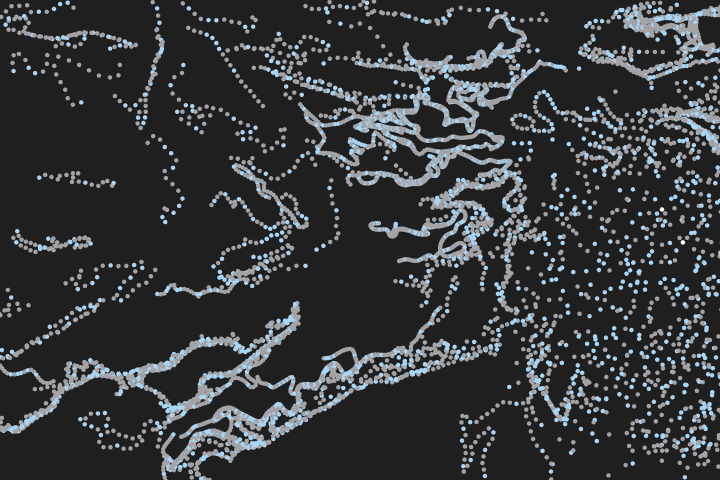

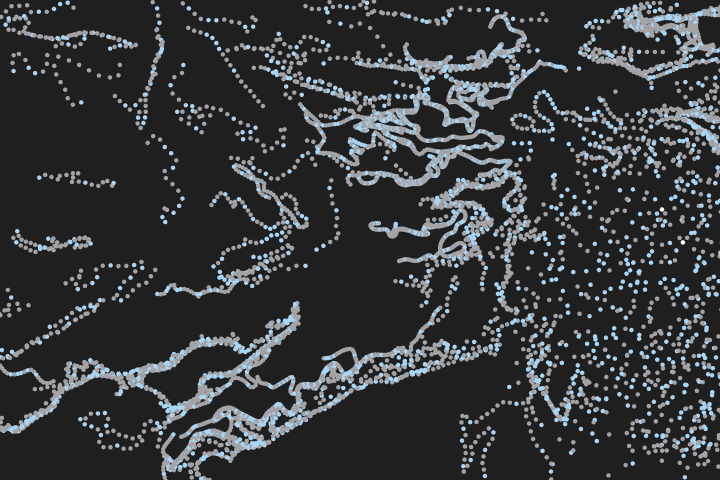

Cost Efficiency

Among frontier models, Gemini 3.0 Flash offered the best cost efficiency at ~$0.39/1000 samples with 56% F1. Among open-weight models, DeepSeek v3.2 was remarkably cheap at ~$0.08/1000 samples with 48% F1, nearly 5x cheaper than Gemini 3.0 Flash. For the highest accuracy, Opus 4.5 cost ~$3.84/1000 samples for 66% F1.

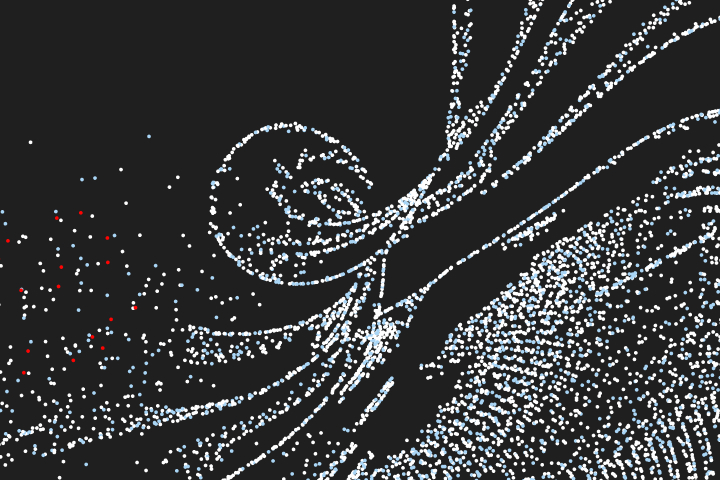

Cost per Task
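The cost multiples quoted in this write-up can be checked with a few lines of arithmetic. The sketch below uses only the approximate per-1000-sample costs stated here; the dictionary and function names are illustrative.

```python
# Approximate USD cost per 1000 samples, as quoted in this write-up.
cost_per_1000 = {
    "Claude Opus 4.5": 3.84,
    "Gemini 3.0 Flash": 0.39,
    "DeepSeek v3.2": 0.08,
}


def cost_ratio(expensive: str, cheap: str) -> float:
    """How many times more the first model costs than the second."""
    return cost_per_1000[expensive] / cost_per_1000[cheap]


flash_vs_deepseek = cost_ratio("Gemini 3.0 Flash", "DeepSeek v3.2")
opus_vs_deepseek = cost_ratio("Claude Opus 4.5", "DeepSeek v3.2")
print(f"Flash / DeepSeek: {flash_vs_deepseek:.1f}x")
print(f"Opus / DeepSeek: {opus_vs_deepseek:.1f}x")
```

The ratios come out to roughly 4.9 and 48, in line with the "nearly 5x" and "nearly 50x" figures; because the input costs are themselves rounded, the multiples should be read as approximate.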

Speed

Haiku 4.5 was the fastest frontier model at 0.75s average per sample, followed by GPT-5.2 at 1.2s. Among open-weight models, Qwen3 235B was fastest at 0.97s, though with lower accuracy (38% F1). DeepSeek v3.2 offered a better speed/accuracy trade-off at 1.9s with 48% F1.

Task Duration (avg)

Model Recommendations

- Gemini 3.0 Flash: Best for maximizing recall. Highest recall (71%) at just ~$0.39/1000 samples. Ideal when missing a technique is costlier than over-predicting.

- Claude Opus 4.5: Best overall F1 (66%) with strong recall (69%). Choose when you need balanced precision and recall.

- Claude Sonnet 4.5: Strong recall (69%) at lower cost than Opus. Good balance for production detection enrichment.

- DeepSeek v3.2: Best open-weight value at ~$0.08/1000 samples. Usable recall (49%) at nearly 50x lower cost than Opus.

- GPT-5.2: Fast frontier option with 63% recall at 1.2s latency. Good for high-throughput pipelines.

Caveats

- Ground truth labels are community-contributed: rule authors may annotate only a subset of applicable TTPs, making precision a noisy metric. A "false positive" may actually be a valid technique the author omitted.

- Recall is the more reliable indicator of model quality, measuring how well the model covers the techniques the human author explicitly intended to assign.

- Hierarchical scoring awards 0.75 partial credit for parent techniques, which may inflate scores compared to strict exact-match evaluation.

- Some Sigma rules have ambiguous or overly broad MITRE mappings in the ground truth.

