macOS Threat Investigation

Mar 2026

BlueBench-Intrusion-001: Real macOS infostealer intrusion spanning incident response, threat hunting, and detection engineering

About This Benchmark

Sample Questions

Q: What persistence artifact was installed? Provide the full plist path.

A: /Library/LaunchDaemons/com.*****.plist (redacted)

Q: What built-in utility is used to decode content immediately before the main payload executes?

A: base64 -d

Q: Write and validate a correlation query that ties local credential validation to a subsequent privileged action within a short time window.

A: [SQL query — executed against the live dataset to validate detection coverage]
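The benchmark's actual answer query and log schema are not published here, so the following is only a minimal sketch of what such a correlation can look like. It assumes two hypothetical tables (`auth_events`, `process_events`), hypothetical column names, a hypothetical `local_password_check` action label, and synthetic rows. It uses Python's built-in `sqlite3` module to stand in for the sandboxed query environment.

```python
import sqlite3

# Hypothetical schema and synthetic rows: NOT the benchmark's real tables or data.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE auth_events (ts INTEGER, host TEXT, user TEXT, action TEXT);
CREATE TABLE process_events (ts INTEGER, host TEXT, user TEXT, cmdline TEXT, euid INTEGER);
""")
conn.executemany("INSERT INTO auth_events VALUES (?, ?, ?, ?)", [
    (1000, "mac-01", "alice", "local_password_check"),
    (5000, "mac-01", "bob", "local_password_check"),
])
conn.executemany("INSERT INTO process_events VALUES (?, ?, ?, ?, ?)", [
    (1020, "mac-01", "alice", "sudo -S true", 0),  # root action 20 s after validation
    (9000, "mac-01", "bob", "sudo -S true", 0),    # root action, but outside the window
    (1010, "mac-01", "alice", "ls -la", 501),      # inside the window, not privileged
])

WINDOW_SECONDS = 60  # assumed "short time window"

# Join each credential validation to privileged process events on the same
# host and user that start within WINDOW_SECONDS after the validation.
CORRELATION_SQL = """
SELECT a.host, a.user, a.ts AS validated_at, p.ts AS privileged_at, p.cmdline
FROM auth_events AS a
JOIN process_events AS p
  ON p.host = a.host
 AND p.user = a.user
 AND p.ts BETWEEN a.ts AND a.ts + :window
WHERE a.action = 'local_password_check'
  AND p.euid = 0
ORDER BY a.ts
"""

hits = conn.execute(CORRELATION_SQL, {"window": WINDOW_SECONDS}).fetchall()
for row in hits:
    print(row)
```

Validating a rule of this shape means checking both directions: the known-malicious sequence is returned, and near misses (a late privileged action, or an unprivileged one inside the window) are not.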

Methodology

Scoring

- Accuracy: LLM-judged 0–1 per question using a weighted rubric: technical accuracy (60%), completeness (25%), specificity (15%)

- Cost: USD per task based on token usage

- Latency: Wall-clock time to complete each investigation track

- Completion: Percentage of tracks finished without unrecoverable errors
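The rubric weights above can be illustrated with a short calculation. This is a sketch only: it assumes the judge produces a 0–1 subscore per criterion and that the subscores are combined as a linear weighted sum, which the report does not state explicitly. The function and key names are hypothetical.

```python
# Weights taken from the Scoring list; the linear combination is an assumption.
WEIGHTS = {
    "technical_accuracy": 0.60,
    "completeness": 0.25,
    "specificity": 0.15,
}


def rubric_score(subscores: dict) -> float:
    """Combine per-criterion subscores (each in [0, 1]) into one 0-1 score."""
    missing = set(WEIGHTS) - set(subscores)
    if missing:
        raise ValueError(f"missing subscores: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= subscores[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return sum(WEIGHTS[name] * subscores[name] for name in WEIGHTS)


# A technically correct answer that is only half complete and not specific.
example = rubric_score(
    {"technical_accuracy": 1.0, "completeness": 0.5, "specificity": 0.0}
)
print(round(example, 3))
```

Under these assumptions, technical accuracy dominates: an answer that is fully accurate but vague still scores at least 0.60.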

Setup

- Logs from a real macOS intrusion loaded into a sandboxed query environment

- Agents given SQL-based query tools to investigate 416K+ events across 14 log sources

- 36 scored questions across three investigation tracks: incident response, threat hunting, and detection engineering

- Questions range from alert triage and kill-chain reconstruction to containment decisions and detection rule authoring

Controls

- Same minimal system prompt for all models, no per-model tuning

- "Thinking" mode enabled where available

- Agent loops capped at 40 iterations; once the cap is reached, tools are removed to force a final answer

- All models given identical tool access and data
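The iteration cap can be sketched as a simple loop. This is not the harness's actual code: `run_agent`, `model_step`, and `run_tool` are hypothetical names, and the model is replaced by a stub so the example runs on its own.

```python
MAX_ITERATIONS = 40  # cap stated in the Controls list


def run_agent(model_step, run_tool, max_iterations=MAX_ITERATIONS):
    """Drive a tool-using agent until it answers or hits the iteration cap.

    model_step(history, tools_enabled) is assumed to return either
    ("tool", query) or ("answer", text).
    """
    history = []
    for _ in range(max_iterations):
        kind, payload = model_step(history, True)
        if kind == "answer":
            return payload
        # Record the tool call and its result for the next step.
        history.append((payload, run_tool(payload)))
    # Cap reached: one last call with tools disabled, so the model must answer.
    kind, payload = model_step(history, False)
    if kind != "answer":
        raise RuntimeError("model did not answer after tools were removed")
    return payload


def stubborn_model(history, tools_enabled):
    """Stub that keeps querying for as long as tools are available."""
    if tools_enabled:
        return ("tool", f"SELECT {len(history)}")
    return ("answer", f"best guess after {len(history)} queries")


result = run_agent(stubborn_model, run_tool=lambda query: "ok")
print(result)
```

Forcing an answer at the cap means a model that never converges is still scored on its best guess rather than being counted as a failed run.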

Key Findings

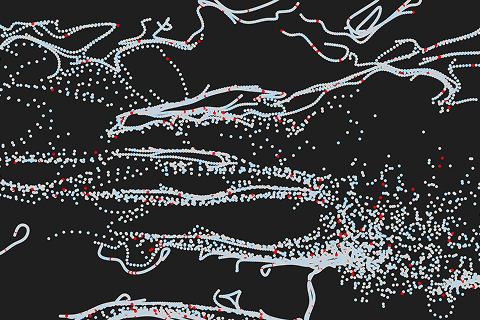

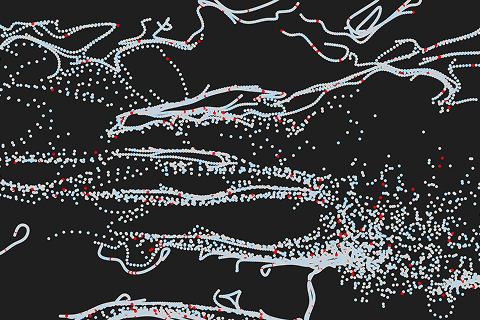

Accuracy (All Tracks)

GPT-5.4 led at 87%, followed by Opus 4.6 (84%) and GPT-5.3 Codex (82%). GPT-5.4 was the most consistent across tracks (83-92%), while Gemini 3.1 Pro scored 89% on threat hunting but dropped to 57-58% elsewhere.

Accuracy by Model

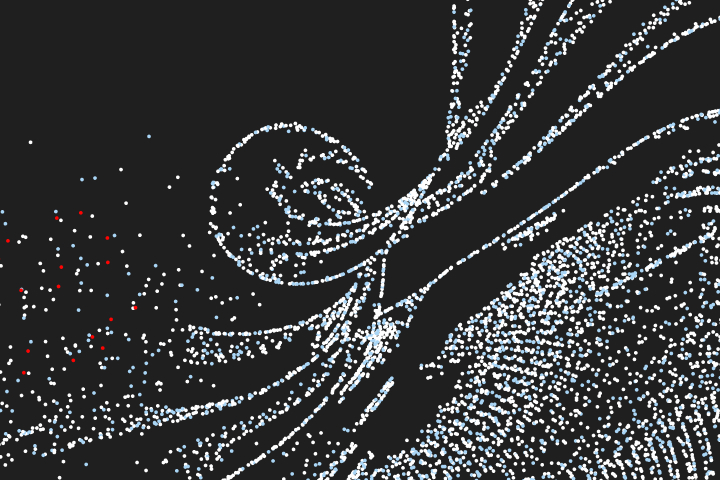

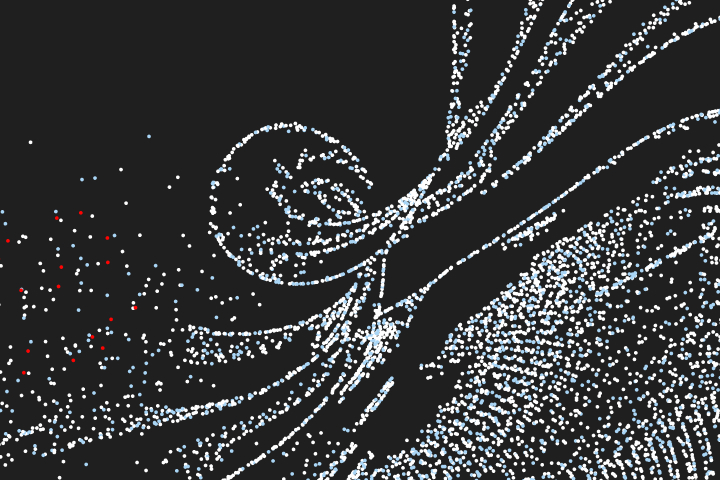

Track Breakdown

Each track rewarded different strengths. Incident response (IR) was the hardest track, with a top score of 83%. Gemini 3.1 Pro dominated threat hunting at 89%. GPT-5.4 and Opus 4.6 tied at 92% on detection engineering. One question stumped every model: locating the hidden credential cache path.

Cost (All Tracks)

IR was the most expensive track across models. Kimi K2.5 had the best value at $0.36/task for 68% accuracy, roughly 8x cheaper than GPT-5.4. GPT-5.4 Mini hit $0.23/task on detection engineering while still scoring 71%.
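The "roughly 8x" figure can be checked with the per-task costs quoted in this report (GPT-5.4 at about $2.81/task, Kimi K2.5 at about $0.36/task). The sketch below also computes dollars per accuracy point, which is an illustrative metric introduced here and not one the benchmark reports.

```python
# Figures quoted in this report; "dollars per accuracy point" is an
# illustrative metric, not something the benchmark itself publishes.
models = {
    "GPT-5.4": {"accuracy_pct": 87, "cost_per_task": 2.81},
    "Kimi K2.5": {"accuracy_pct": 68, "cost_per_task": 0.36},
}

cost_ratio = models["GPT-5.4"]["cost_per_task"] / models["Kimi K2.5"]["cost_per_task"]
print(f"GPT-5.4 costs {cost_ratio:.1f}x as much per task as Kimi K2.5")

dollars_per_point = {
    name: m["cost_per_task"] / m["accuracy_pct"] for name, m in models.items()
}
for name, value in dollars_per_point.items():
    print(f"{name}: ${value:.4f} per accuracy point per task")
```

A ratio like this only describes cost efficiency; it says nothing about whether 68% accuracy is acceptable for a given investigation.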

Cost per Task

Speed (All Tracks)

GPT-5.4 Mini finished tracks in ~5 minutes. GPT-5.4 and GPT-5.3 Codex averaged ~37 minutes. Gemini 3.0 Flash was slowest at 89 minutes per track.

Task Duration (avg)

Reliability (All Tracks)

Eight of nine models achieved 100% completion. The exception was Gemini 3.1 Pro, which had 2 failures out of 40 runs (95% completion).

Task Completion Rate

Model Recommendations

- GPT-5.4 — Best for high-stakes investigations. Highest accuracy at 87% with no weak tracks (83-92%) at ~$2.81/task.

- Kimi K2.5 — Best value overall. Achieves 68% accuracy at ~$0.36/task with 100% reliability, and scores 79% on threat hunting specifically.

- GPT-5.4 Mini — Best for rapid triage. Completes tracks in ~5 minutes and scores 71% on detection engineering at just $0.23/task.

- Gemini 3.1 Pro — Best for threat hunting. Scores 89% on that track (highest of any model on any track) at $1.63/task, but weaker on IR and detection engineering.

- Claude Opus 4.6 — Second highest accuracy at 84%, tied for best on detection engineering (92%). Strongest pick in the Anthropic ecosystem.

Caveats

- Analysis questions use LLM-judged scoring, which introduces some variability compared to exact-match evaluation.

- Costs are reported per task, with 12 tasks in each track.

- Gemini 3.1 Pro was in preview at the time of evaluation with tight rate limits and high API response latency. Its wall-clock times may not reflect production performance.

